First-Hand:No Damned Computer is Going to Tell Me What to DO - The Story of the Naval Tactical Data System, NTDS

INTRODUCTION

It was 1962. Some of the prospective commanding officers of the new guided missile frigates, then on the building ways, had found out that the Naval Tactical Data System (NTDS) was going to be built into their new ships, and it did not sit well with them. Some of them came in to our project office to let us know first hand that no damned computer was going to tell them what to do. For sure, no damned computer was going to fire their nuclear-tipped guided missiles. They would take their new ships to sea, but they would not turn on our damned system with its newfangled electronic brain.

We would try to explain to them that the new digital system, the first digitized weapon system in the US Navy, was designed to be an aid to their judgment in task force anti-air battle management, and would never, on its own, fire their weapons. We didn’t mention to them that if they refused to use the system, they would probably be instantly removed from their commands and maybe court-martialed, because the highest levels of Navy management wanted the new digital computer-driven system in the fleet as soon as possible, and for good reason.

Secretary of the Navy John B. Connally, a former World War II task force fighter director officer, and Chief of Naval Operations Admiral Arleigh A. Burke were solidly behind the new system, and were pushing the small NTDS project office in the Bureau of Ships to accomplish in five years what would normally take fourteen years. The reason behind their push was Top Secret, and thus not known even by many naval officers and senior civil servants in the top hierarchy of the navy. Senior navy management did not want the Soviet Union to know that task force air defense exercises of the early 1950s had revealed that the US surface fleet could not cope with expected Soviet style massed air attacks using new high speed jet airplanes and high speed standoff missiles.

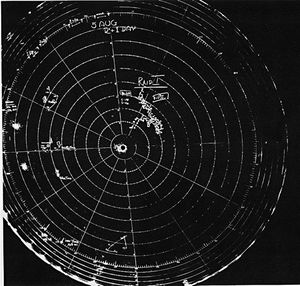

As of 1954 the US Navy, like every other navy, tied its task force air defense together with a team in which most of the moving parts were human beings. Radar blips of attacking aircraft, as well as friendly airplanes, were manually picked from radar scopes and manually plotted on backlit plotting tables. The course and speed of a target aircraft were manually calculated from the plots of its successive radar blips, and then written in by hand near the target’s plotted track. If air target altitude had been measured by height finding radar, or estimated by “fade zone” techniques, the altitude was also penciled in near the track line. Whether the target was known hostile, known friendly, or unknown was also penciled in, as was an assigned track number.
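The course and speed calculation that the plotters did by hand can be illustrated with a short sketch. It assumes each plot is a range in nautical miles and a true bearing in degrees measured from own ship, and it ignores own ship's motion between plots; the function names and the sample figures are hypothetical, chosen only for illustration.

```python
import math


def polar_to_xy(range_nmi, bearing_deg):
    """Convert range and true bearing (clockwise from north) to east/north offsets in nmi."""
    theta = math.radians(bearing_deg)
    return range_nmi * math.sin(theta), range_nmi * math.cos(theta)


def course_and_speed(plot_a, plot_b, seconds_between):
    """Estimate target course (degrees true) and speed (knots) from two successive plots.

    Each plot is a (range_nmi, bearing_deg) pair; own ship is assumed stationary.
    """
    xa, ya = polar_to_xy(*plot_a)
    xb, yb = polar_to_xy(*plot_b)
    dx, dy = xb - xa, yb - ya
    distance_nmi = math.hypot(dx, dy)
    # atan2(east, north) gives a compass-style angle measured clockwise from north.
    course_deg = math.degrees(math.atan2(dx, dy)) % 360.0
    speed_knots = distance_nmi / (seconds_between / 3600.0)
    return course_deg, speed_knots


# Illustrative plots: a target due north at 60 nmi, then at 52 nmi one minute later.
course, speed = course_and_speed((60.0, 0.0), (52.0, 0.0), 60.0)
print(f"course {course:03.0f} true, speed {speed:.0f} knots")
```

A target that closes eight miles in one minute is making 480 knots, which gives a sense of how little time a plotting team had to repeat this arithmetic for every track.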

No plotting team on any one ship in the task force could possibly measure and plot radar data on every raid in a massed air attack which might involve a few hundred raids coming at the task force from all points of the compass and at altitudes from sea level to 35,000 feet. Rather, as had been worked out in the great Pacific air battles of World War II, each ship in the task force measured and plotted the air targets in an assigned pie shaped wedge on their radar scopes, and their fighter director officers and gunnery coordinators controlled the fighter-interceptors and ship’s AA guns within that assigned piece of pie.

The plotting team on each ship also reported the position of each air target they were tracking over a voice radio network to all other ships in the task force. Each ship could thus maintain a summary plot of all air targets, so that an air target passing from one pie wedge to another could be instantly identified and its track maintained by a new reporting ship. This manual plot was done with grease pencil on a large transparent plexiglass screen in each ship’s combat information center (CIC). The plexiglass was edge lighted so that the yellow grease penciled track plots glowed softly in the darkened CICs. Wearing radio headsets, the sailors doing the plotting wrote the target information in a reverse, mirror image style, so that fighter directors and battle managers sitting on the other side of the vertical summary plot could read the annotated grease pencil markings.

The fleet air defense management system had worked reasonably well during even the greatest ocean air battles of WW II. Secretary Connally did remember one massed Kamikaze air attack where the plotting teams were almost overwhelmed, and he had to forgo his job as task force Fighter Director Officer and take control of a specific pair of interceptors to guide them to intercept a group of incoming Kamikazes. [Tillman, Barrett, “Coaching the Fighters,” U.S. Naval Institute Proceedings, Vol. 106/1/923, pp 39-45, Jan 1980, pp. 43-44] The difference in the decade from 1944 to 1954 was new jet propelled aircraft that could travel almost twice as fast as their World War II counterparts. Manual plotting teams in post WW II shipboard combat information centers (CIC) just could not handle a massed attack of the new high speed jet aircraft, and in the minds of some senior Navy officials the future of the US surface fleet was in doubt. The radar plotting teams, the fighter directors, and the gunnery and missile coordinators needed some kind of automated help.

The first attempts to solve the fleet air defense management problem used massive electromechanical analog computing devices, and they didn’t work very well, primarily because their high count of moving parts made them unreliable. Next, the Navy tried electronic vacuum tube-based analog computers, which did not work much better because they needed so many tubes. The final solution came from the Navy’s codebreakers who had, in great secrecy, been using digital computers to decrypt encoded messages. A fortuitous combination of two young naval engineering duty officer commanders, one of whom was an expert in radar technology, and the other of whom was not only highly experienced in wartime operational use of radar, but also had been in charge of designing and building the Navy’s codebreaking computers, resulted in their conception of the digital computer based Naval Tactical Data System in 1955.

In spite of the dependence upon three new immature technologies: digital computers, transistors, and large scale computer programming; and in spite of determined resistance by many senior naval officers, the NTDS project would later be acclaimed as one of the most successful projects ever undertaken by the US Navy. It would be the new science/art of computer programming that would almost bring the project to its knees, and it would be the reliability of the new digital equipment combined with a new breed of expert sailors, called Data System Technicians, that would save the project, and give the US Navy a powerful new capability it never had before.

FROM KAMIKAZES TO CODEBREAKERS, ORIGIN OF THE NAVAL TACTICAL DATA SYSTEM

Visions of Disaster at Sea

Legacy of the Divine Wind

For the most part they were just teenagers. They had been hastily trained, and they were so vulnerable to the new American high performance fighters that more experienced fighter pilots were assigned to protect them. Also, many times their light Zero fighters just bounced off their intended target ships, especially if the pilots had forgotten to pull the arming lever of their 550 pound bomb. [Inoguchi, Capt. Rikihei & Nakajima, Cdr. Tadashi, The Divine Wind - Japan’s Kamikaze Force in World War II, Naval Institute Press, Annapolis, MD, 1958, Lib. Cong. Cat # 58-13974, pp 90-105] For example, on 16 April 1945, the destroyer USS Laffey, a veteran of the Normandy invasion, was attacked by 22 Kamikazes and sustained three direct Kamikaze hits, as well as bomb hits, but remained afloat. Among them, Laffey, her AA armed landing craft escort, and her overhead combat air patrol fighters shot down 16 of the 22 attackers, with Laffey’s guns accounting for the bulk of the kills. [Becton, Radm. F. Julian, The Ship that Would Not Die, Pictorial Histories Publishing Co, Missoula, MT, 1980, ISBN 0-933126-87-5, p 245]

Even though the Allies destroyed the Kamikaze airplanes in great numbers, they kept coming in great numbers, and they did considerable damage. By the end of WW II the estimate of Kamikaze damage was seventy one Allied ships either sunk, or so damaged they could not be repaired, and these included a number of aircraft carriers. Another 150 ships had been so damaged that they had to be taken off the line for many months for shipyard repair. More than 6,600 Allied soldiers and sailors were killed in the Kamikaze attacks, and another 8,000 wounded. [Brown, David, Kamikaze, Gallery Books, New York, 1990, ISBN 0-8317-2671-7, p 78]

More worrisome was Japan’s latest suicide weapon, the “Ohka” (cherry blossom) rocket boosted glide bomb. In its descent, it was much faster than the Zero Kamikaze fighter, and so, much harder to shoot down by fighters or ship’s guns. The Ohkas were “standoff weapons” slung under Mitsubishi G4M (Betty) twin engined bombers. On 12 April 1945 the first Ohka to be dropped in anger slammed into the US destroyer Mannert L. Abele, and broke its back. The ship went to the bottom in minutes. [Brown p 67] The Ohkas were to be launched about 11 miles from their target ships, and the Allies concluded that the best way to defend against the Ohka was to shoot down the carrying mother plane before it reached launch point for its ‘standoff weapon.’ [Inoguchi p 141]

The guidance mechanism in the Ohka was the most advanced mechanism in the history of the human race, a human being. In the years immediately following World War II, US Navy planners noted that even though future hostile nations might not resort to suicide weapons, great strides had been made in analog computing devices and precision guidance mechanisms, and that high speed, automatically guided, air launched standoff weapons could pose great danger to the US surface fleet.

The Postwar Fleet Air Defense Exercises

Even though in the years immediately preceding WW II the major warring powers had almost simultaneously, and secretly, developed rudimentary radar sets, Germany and Japan had not pressed their development. This seems to be primarily because the military leaders of both these nations believed in rapid, violent, aggressive conquests; whereas they considered radar to be a defensive weapon and not needed in their expected “short” wars of aggression. Furthermore, they expected their conquests to be completed before the time it would take to develop useful radar capability. It would not be until it was too late to significantly aid their war fighting that they realized they needed to put effort into radar development.

The Allies on the other hand, especially Great Britain and the United States, embraced radar and developed it to its fullest extent. By the end of hostilities the Allies not only had their original, but greatly improved, air search radars, but also precision surface search radars, and pencil beam fire control radars embedded in anti-surface and anti-air gunnery fire control systems. The biggest problem with U.S. naval radar in 1945 was that it produced an enormous amount of data but not enough information.

The wartime U.S. Chief of Naval Operations, Admiral Ernest J. King, wrote a letter in October 1945 to the Chiefs of the Bureaus of Ordnance, Aeronautics and Ships expressing the fleet’s frustration regarding the prolific amount of data that radar was capable of providing. He noted that by the end of WW II: “The display of information was slow, complicated and incomplete, rendering it difficult for the human mind to grasp the entire situation either rapidly or correctly and resulting in the inability to handle more than a few raids simultaneously. Weak communications prevented information from being properly collected or disseminated either internally aboard ships or externally between ships of a force.” He noted that what was needed was “A method of presenting radar information automatically, instantaneously and continuously and in such a manner that the human mind ... may receive and act upon the information in the most convenient form; [plus] instantaneous dissemination of information within the ship and force.” [Bryant, William C., LT, USNR and Hermaine, Heath I., LT, USNR, History of Naval Fighter Direction, C.I.C. Magazine, U.S. Navy, Bureau of Aeronautics, April, May, and June 1946]

This charge from Admiral King to the bureau chiefs meant that they were authorized to spend a lot of time, money and effort on solving the radar data handling problem, but as we will see, for a decade, due to the lack of the right technologies in the minds of men with the right imaginations, the solution would just not be in hand. In the meantime, new turbojet engine technology enabled attacking aircraft to fly even faster, and the problem only got worse.

“Whereas a Lancaster taking off gives the impression of tremendous power hauling a ponderous weight triumphantly into the air, a Mosquito taking off rather suggests a bundle of ferocious energy that the pilot has to fight to keep down on to the runway. The place to watch is from the end just where the Mozzie gets airborne. You see her starting towards you along the flarepath, just a red and green wingtip light. Soon you can distinguish her shape, slim and somehow evil, and suddenly she is screaming toward you just like a gigantic cat. A moment later she is past and thirty feet up in the air.” [Bowyer, Chaz, Royal Air Force - The Aircraft in Service Since 1918, Temple Press, 1981, ISBN 0 600 34933 0, p 101]

Even though the de Havilland D.H. 98 Mosquito was classified as a light bomber, it could carry a heavier bomb load than the American Boeing B-17 heavy bomber, and, powered by its two Rolls-Royce Merlin piston engines, it could outpace most models of the Supermarine Spitfire. In addition to the unarmed bomber version, other Mosquito configurations were built including heavily armed night fighters and unarmed photo-reconnaissance aircraft. The photo-recon version was especially fast so that it could outrun any pursuing fighter in the Luftwaffe. [Sweetman, Bill, Mosquito, Crown Publishers, Inc., New York, ISBN 0-517-548542, p 12, p 21] That was until 25 July 1944 when Flight Lieutenant A. E. Wall, piloting a photo-reconnaissance Mosquito over Munich, was accosted by a fast moving German aircraft having no propellers. Wall could not outdistance the new jet propelled Messerschmitt Me 262 no matter how much power he applied, and he escaped only by diving into an obscuring cloud bank. [Boyne, Walter J., Messerschmitt Me 262, Smithsonian Institution Press, Washington, D.C., 1980, ISBN 0-87474-276-5, p. 41] The jet age had arrived. Soon there would be a new generation of jet propelled attack airplanes capable of traveling twice as fast as their World War II predecessors.

The increased speed of the new jet propelled attackers was enough to cause great alarm for specialists in fleet air defense, but there was one small comfort: aeronautical scientists of the mid 1940s questioned whether a manned aircraft could fly faster than the speed of sound, which is approximately 761 miles per hour at sea level, decreasing to about 660 mph at 36,000 feet altitude. The scientists knew that there were controllability problems as aircraft speed neared sonic speed, and there were questions whether airplane structures would hold up to the heavy buffeting encountered at these speeds due to the airflow around the craft transitioning unevenly at different places on the airplane from laminar subsonic flow to compressible supersonic flow.
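The two speed-of-sound figures quoted above follow from the fall in air temperature with altitude. The sketch below reproduces them approximately, assuming standard-atmosphere values (a sea-level temperature of 288.15 K and a constant lapse rate up to the tropopause); these constants and the function name are assumptions brought in for illustration and do not come from the original account.

```python
import math

GAMMA = 1.4                  # ratio of specific heats for dry air
R_AIR = 287.05               # specific gas constant for air, J/(kg K)
T_SEA_LEVEL_K = 288.15       # assumed standard sea-level temperature
LAPSE_K_PER_FT = 0.0019812   # assumed standard lapse rate below the tropopause
TROPOPAUSE_FT = 36089.0      # above this the standard temperature stops falling
MPS_TO_MPH = 1.0 / 0.44704


def speed_of_sound_mph(altitude_ft):
    """Speed of sound in mph at a given altitude in an assumed standard atmosphere."""
    capped_altitude = min(altitude_ft, TROPOPAUSE_FT)
    temperature_k = T_SEA_LEVEL_K - LAPSE_K_PER_FT * capped_altitude
    # a = sqrt(gamma * R * T): the speed of sound depends only on temperature.
    return math.sqrt(GAMMA * R_AIR * temperature_k) * MPS_TO_MPH


for altitude in (0, 36000):
    print(f"{altitude:>6} ft: about {speed_of_sound_mph(altitude):.0f} mph")
```

Under these assumptions the results land within a mile per hour or two of the 761 mph and 660 mph figures in the text.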

On 14 October 1947 Captain Charles E. Yeager dispelled these concerns when, at 20 thousand feet above the National Advisory Committee for Aeronautics’ High Speed Flight Station in the Mojave Desert, he was released from the bomb bay of a modified B-29 ‘mother airplane’ piloting the Bell XS-1 research airplane. As soon as he was clear of the B-29, Yeager switched on two of the four chambers of his XLR11 rocket engine and climbed to 40 thousand feet where he was to make his speed run. At the planned altitude he switched on a third rocket chamber and within seconds his mach meter registered 1.06 times the speed of sound. He then shut down all rocket chambers and glided the XS-1 down to the dry lake bed next to the High Speed Flight Station. Yeager reported no control problems and only minor transonic buffeting. [Miller, Jay, The X-Planes X-1 to X-31, Aerofax, Inc., Arlington, Texas, 1968, ISBN 0-517-56749-0, p. 19] Controlled, manned supersonic flight was now a reality, and manned supersonic attack aircraft would soon follow.

The Royal Navy had also made great strides in naval radar during WW II and had a radar-based fleet air defense organization similar to the US Navy’s wherein search radar information was used to coordinate ship’s guns and airborne fighter-interceptors against incoming attack aircraft. In 1948 both the Royal and US Navy’s fleet air defense organizations were manually intensive with no automated support. Aircraft tracks were still manually plotted on plotting boards, airplane speeds were still manually calculated from successive radar blips, and fighter intercept vectors were calculated on sheets of paper maneuvering boards. Details such as raid height, identification, and track number were manually entered on status boards.

The Royal Navy in 1948 ran fleet air defense exercises where task forces were ‘attacked’ by multiple high speed jet aircraft coming from different bearings and at different altitudes. They found that, because of the high speeds of the incoming aircraft, the manual fleet air defense organization could adequately and accurately plot about 12 simultaneous incoming raids and effectively assign interceptors or ship’s guns to engage them. When the number of attacking raids (wherein one raid can be just one attacker or many airplanes flying together) exceeded twenty the air defense organizations fell apart. The inter ship voice radio data passing personnel, the manual plotters, the fighter directors, and the gunnery coordinators were overwhelmed. It did not matter how experienced they were, or how many of them were working together, they just could not adequately handle the incoming flood of radar data on attackers and friendly interceptors, not to mention keeping track of which interceptor or ship’s guns was assigned to which attacker. [Bailey, Dennis M., Aegis Guided Missile Cruiser, Motorbooks International Publishers & Wholesalers, Osceola, WI, 1991, ISBN 0-87938-545-6, p. 39]

In 1950 the U.S. Navy conducted similar fleet air defense exercises which simulated the massive air attacks expected of the Soviet Union on U.S. fleet units. High speed air attackers again came in from all directions and altitudes. The attacks were planned out ahead of time so that attack plans could be compared with what the fleet units actually saw and recorded. The results were devastating; one fourth of the attackers were never recorded by any of the shipboard combat information centers. Even worse, of the attacking planes which were detected and plotted, the gunnery coordinators and fighter directors only assigned about 75% to guns or interceptors. Even if it was unrealistically assumed that every assigned interceptor or gun made a kill, about half of the attackers would have made it through to the defended fleet center without having been engaged by a defensive weapon. [Bailey p. 39] In real life, the surface fleet units would have been annihilated. For good reason, the exercise results were not publicized, and considerable research and development was focused on trying to correct the U.S. Navy’s inability to make adroit use of the massive amount of radar data on high speed attackers, data that was available but could not be turned into useful information in a timely manner by fleet units. There were concerns at the highest level of Navy management that the U.S. surface fleet was no longer a viable force in being.
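The “about half” figure can be checked with simple arithmetic: if three quarters of the attackers were detected and about three quarters of those were assigned to a weapon, only about 56% were ever engaged. A minimal sketch, with a hypothetical function name and the two fractions taken from the paragraph above:

```python
def fraction_unengaged(detected_fraction, assigned_fraction_of_detected):
    """Fraction of attackers never engaged by any gun or interceptor."""
    engaged = detected_fraction * assigned_fraction_of_detected
    return 1.0 - engaged


# One fourth never recorded, so 0.75 detected; about 75% of those assigned.
leakers = fraction_unengaged(0.75, 0.75)
print(f"engaged: {1.0 - leakers:.2f}, unengaged: {leakers:.2f}")
```

The unengaged share works out to roughly 44%, which is the “about half” cited, and that is before allowing for any assigned weapon that missed.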

Towards a Dual Solution

The Guided Missile Systems

TALOS

The principal US shipboard anti-aircraft weapons of World War II were the 5-inch 38 caliber and 3-inch 50 caliber guns and the 20 and 40 millimeter (MM) automatic cannons. (“38 caliber” means the barrel was 38 times as long as the 5-inch bore of the barrel.) The 20 and 40 MM cannons had a maximum effective aerial range of about two miles, the three-inch gun an aerial range of less than six miles, and the 5-inch gun could effectively bring down aircraft at a range of a little over six miles. A well trained five-inch gun crew could maintain a firing rate of one projectile every three seconds, prompting awed opposing Japanese forces to believe the USN had an automatic five-inch cannon. [Roscoe, Theodore, United States Destroyer Operations in World War II, United States Naval Institute, Annapolis, MD, 1953, Library of Congress card No. 53-4273, pp 15-18]

By mid 1944 the US Navy was experimenting with various air launched standoff weapons and senior navy officials had every reason to believe that the Axis powers were doing the same, which they were, as exemplified by later German guided glide bombs, and the Japanese human guided rocket powered Ohka bomb. Bureau of Ordnance (BuOrd) managers realized that new shipboard anti-air defense weapons having much greater lethal ranges than the six miles of the 5-inch gun were needed to counter the expected air launched standoff weapons. In July 1944 BuOrd tasked the Applied Physics Laboratory (APL) of Johns Hopkins University to recommend solutions.

The laboratory responded with the recommendation of a ship-launched guided supersonic missile powered by an air breathing ramjet engine. The missile would have a range of about 60 miles and would be guided by the launching ship using a precision fire control radar coupled to an analog fire control computer which would transmit steering commands to the missile. When the missile came within lethal range of its target a radar-based proximity fuse in the missile would detonate the warhead.

In January 1945 the Bureau tasked the Applied Physics Lab to begin work on developing a ramjet engine, the key unknown component of the proposed new guided missile. The project was given the code name “Bumblebee,” and on 19 October 1945 a Bumblebee ramjet test vehicle first exceeded the speed of sound. [Klingaman, William K., APL-Fifty Years of Service to the Nation - A History of The Johns Hopkins University Applied Physics Laboratory, The Johns Hopkins University Applied Physics Laboratory, Laurel, MD, 1993, ISBN 0-912025-04-2, pp 21-33]

Even though the Japanese surrender on 2 September 1945 had ended World War II, the navy asked Johns Hopkins to continue work on Bumblebee at an urgent pace because of continuing concern about the perceived threat from supersonic attack aircraft combined with standoff weapons. In April 1948 the Chief of Naval Operations directed that the lessons learned in the Bumblebee project should be focused on developing a complete long range guided missile system. At this time the Applied Physics Laboratory was engaged in what was called a “Section T” contract with the US Navy, and all missiles developed under the contract were given a name beginning with the letter “T.” History does not seem to record who came up with the name “Talos” for the new ramjet powered guided missile project, or how.

TERRIER

The ramjet missiles were complex and expensive, and numerous flight tests were required to test the supersonic aerodynamic performance of the vehicles and their guidance systems. To save money APL had come up with a simpler, less expensive, two-stage solid rocket propelled test vehicle, designated STV-3, to gather flight test data. Its guidance system was relatively simple in that it followed the center of a radar beam locked on the target. The “beam-rider” guidance was found to be very effective, and APL proposed to the navy that, given a warhead, the STV-3 test vehicle could be a lethal medium range (about 20 miles) guided missile. In April 1948 BuOrd was under intense pressure from the Chief of Naval Operations to accelerate development of shipboard guided missiles. The bureau therefore jumped at the chance and directed APL to begin turning the STV-3 into a weapon system in parallel with continued development of the longer range Talos missile. The manager of the new branch-off project, APL’s Richard Kershner, following the “T”-for-first-letter convention, christened the new missile “Terrier” in recognition of its tenacity in clinging to the center of its radar guidance beam. The Bureau of Ordnance contracted with Convair Corporation to build a run of Terrier test missiles under the technical guidance of the Applied Physics Laboratory. [Klingaman pp 62-64]

TARTAR

The Talos missile was over 30 feet long and required large below-decks magazines to store a war load of missiles, not to mention the above decks space required for its missile launchers and fire control radars. From the beginning BuOrd realized that only ships the size of heavy cruisers, or larger, could be fitted with the Talos missile system. The Terrier system, although less demanding of internal shipboard volume and topside space, still needed a seagoing launching platform having the displacement of a light cruiser.

In 1953 the Office of the Chief of Naval Operations issued a new operational requirement for an anti-air guided missile system small enough to fit on destroyer type ships. APL responded with a report proposing a new missile using many components of the Terrier missile, but having the booster and sustainer solid propellant rocket motors combined into one motor. The lab worked out a design whereby the deck mounted missile launcher and below decks missile magazine would be small enough to replace the forward five-inch gun mount and magazine in destroyers. The new missile system was named Tartar, and in December 1955, BuOrd awarded another contract to Convair Corporation to build a production run of Tartar surface-to-air missiles having about ten miles lethal range. Again APL was charged with coordination and technical direction of the contract. [Klingaman pp 102-104]

Early Guided Missile Ships

The guided missile, by itself, can be considered to be just the tip of the “iceberg” when it comes to a shipboard guided missile system. Other externally visible components were the missile launchers and the fire control radars. If we take the Terrier system, for example, the “twin arm” launchers had to be capable of receiving two missiles simultaneously from the below deck magazine, and then pointing the missiles at the required train and elevation position and then firing the missiles in eight tenths of a second after loading. The launcher then had to move back to loading position, receive two more missiles, and have them pointed for firing in 30 seconds.

Much later these articulated launchers would be replaced by vertical launchers capable of firing the missiles directly from their storage position in the magazines in newer guided missile ships. Each Terrier missile weighed about one and one half tons, and there was no time for manhandling them. The missile magazines had to be fully mechanized, and in early guided missile cruisers had to accommodate 144 Terriers in two magazines. [Moore, CAPT John, R.N., Editor, Jane’s American Fighting Ships of the 20th Century, Mallard Press, New York, 1991, ISBN 0-7924-5626-2]

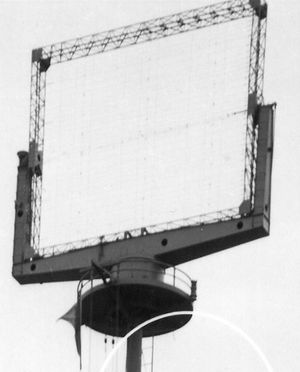

In the Terrier system, two missile fire control radar directors were specified for each twin-arm launcher. The fire control radar directors were not only precision hydraulically driven mechanical devices towering almost two stories high, but they also had to point a narrow pencil beam of radar at finely defined train and elevation angles which had to be constantly updated to compensate for ship’s roll, pitch, and yaw, not to mention constant compensation for target motion.

Each radar director, in turn, was controlled by an electromechanical analog computer which was a marvel of precision electronics and mechanical ingenuity. Among its many functions, the fire control computer pointed the radar director at the expected position in space of a new target using various computer generated search patterns depending on whether, among other things, target altitude was known, or whether only target range and bearing data was available. Once the radar was “locked on” and tracking the target, the computer calculated future target positions in space as well as launcher orders to point the missile launcher at the computed point of intercept.

The usual source of new target data was from shipboard search radars which did not have the precision to generate a fire control solution, but had the advantage of showing all the air targets within a hundred miles or so around the firing ship within a number of seconds. Of the air targets detected by the search radar operators, decisions had to be quickly made which ones were known friendlies, which were confirmed hostiles, and which were “identity unknown.” Of the hostile targets, decisions had to then be quickly made as to which ones appeared to present the most imminent threat to the defended area of the ship formation. These requirements necessitated yet another component of the missile system, the weapons direction system (WDS).

The missile weapons direction systems can probably be credited as the earliest relatively successful attempt at radar data automation, though only for a limited number of targets, usually eight in the early shipboard guided missile systems. From a few repeated manual inputs of target range, bearing and elevation (if available) from search radars, the analog tracking channels in the WDS began to compute target speeds and future predicted target positions, which were fed back to the WDS operator’s radar displays. From this predicted target information, weapons control officers could make informed decisions as to which hostile targets appeared most threatening. A high threat target could then be fed to a missile fire control computer which would coach a radar director to lock on to the selected target.
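What a tracking channel did can be suggested, very loosely, by a digital stand-in. The actual WDS channels were analog devices, so the sketch below is only an illustration of the idea: a fixed number of channels, each accepting repeated position fixes and extrapolating a straight-line future position. The class names, the east/north coordinates, and the sample fixes are all hypothetical; only the limit of eight channels comes from the text.

```python
from dataclasses import dataclass, field

MAX_CHANNELS = 8  # the text gives eight as the usual limit in early systems


@dataclass
class TrackChannel:
    """One hypothetical tracking channel holding operator-entered fixes."""
    fixes: list = field(default_factory=list)  # (time_s, east_nmi, north_nmi)

    def add_fix(self, time_s, east_nmi, north_nmi):
        self.fixes.append((time_s, east_nmi, north_nmi))

    def velocity(self):
        """Average velocity in nmi per second, or None until two usable fixes exist."""
        if len(self.fixes) < 2:
            return None
        t0, e0, n0 = self.fixes[0]
        t1, e1, n1 = self.fixes[-1]
        if t1 == t0:
            return None
        return (e1 - e0) / (t1 - t0), (n1 - n0) / (t1 - t0)

    def predict(self, time_s):
        """Straight-line predicted position at time_s, or None if no velocity yet."""
        v = self.velocity()
        if v is None:
            return None
        t1, e1, n1 = self.fixes[-1]
        dt = time_s - t1
        return e1 + v[0] * dt, n1 + v[1] * dt


class TrackingTable:
    """Hands out channels by track number until all of them are in use."""

    def __init__(self):
        self.channels = {}

    def channel_for(self, track_number):
        if track_number not in self.channels:
            if len(self.channels) >= MAX_CHANNELS:
                raise RuntimeError("all tracking channels are in use")
            self.channels[track_number] = TrackChannel()
        return self.channels[track_number]


table = TrackingTable()
channel = table.channel_for(101)
channel.add_fix(0.0, 0.0, 60.0)    # due north at 60 nmi
channel.add_fix(60.0, 0.0, 52.0)   # one minute later, 52 nmi
predicted = channel.predict(120.0)  # position one minute after the last fix
print(predicted)
```

The hard cap on channels is the point of the sketch: once every channel is occupied, a further target cannot be tracked until one is released, which is the kind of limitation the text describes.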

Once locked on and automatically tracking the target, the fire control computer would compute a fire control solution, and, among other things, feed information back to the WDS radar displays showing when and where the target would be within firing range, and a recommended time to fire. It can be seen, even from this oversimplified description, that the proposed shipboard guided missile systems were extremely complex engineering challenges with a multitude of moving parts, all of which had to work in very precise concert with each other.

The World War II battleship USS Mississippi (BB 41) (then designated an experimental gunnery ship with new hull number EAG 128) was the first USN ship to receive a shipboard surface missile system. In this case Norfolk Naval Shipyard completed installation of an experimental Terrier system in Mississippi on 9 August 1952, and on 28-29 January 1953, her crew fired Terriers in successful tests off Cape Cod. [Navy Department, Office of the Chief of Naval Operations, Naval History Division, Dictionary of American Naval Fighting Ships - Volume III pp 820-821, United States Government Printing Office, Washington, D.C., 1964-1981 (hereinafter referred to as CNO Dict. Fighting Ships)]

Heavy cruiser USS Boston was the first USN ship to receive a “production” surface missile system battery. New York Shipbuilding at Camden, NJ, began removing Boston’s aft 8-inch gun turret in early 1951, and completed installing two dual launcher Terrier batteries in the place of the gun turret in late 1955. The four-year installation period attests to the complexity and engineering challenges involved in the new missile system. [King, RADM Randolph W., USN, and Palmer, LCDR Prescott, USN, Editors, Naval Engineering and American Seapower, Nautical and Aviation Publishing Company of America, Baltimore, MD, ISBN 0-912-04-2, p. 288] Boston’s sister ship, USS Canberra, completed a similar Terrier installation at New York Shipbuilding on 1 June 1956 to become the second guided missile cruiser.

Even though the ramjet powered Talos missile system was the first to start research and development, its progress was slower due to size and increased complexity, but it caught up with Terrier development in May 1959 when the light cruiser Galveston was recommissioned with a Talos missile battery in place of its former aft 6-inch gun turret. The World War II light cruisers Little Rock and Oklahoma City, Galveston’s sister ships, were also fitted with similar Talos batteries. From September 1959 to March 1960 three more WW II light cruisers, Topeka, Springfield, and Providence, had their aft 6-inch gun turrets replaced, this time by Terrier missile installations.

The smaller Tartar missile system finally went operational when the US Navy’s first guided missile destroyer, USS Charles F. Adams, built by Bath Iron Works at Bath, Maine, was commissioned at Boston Naval Shipyard on 10 September 1960. Adams was equipped with two 5-inch guns but with a twin rail Tartar system aft where the third 5-inch mount would have normally been. [Moore p. 170]. The last of the World War II veteran cruisers to be converted to guided missile ships were the three heavy cruisers Chicago, Albany and Columbus. In 1959 naval shipyards began removing all of their 8-inch gun turrets to be replaced by Talos batteries fore and aft, and with two Tartar missile systems mounted on either side at midships. [CNO Dict. Fighting Ships Vol III p. 823]

The Guided Missile Frigates

By 1960, the U.S. Navy had run out of World War II veteran cruisers suitable for conversion to guided missile ships. More surface missile systems were needed at sea to provide air defense for the “heavies” at the protected center of the formation, but any new missile systems would have to be installed in new-construction ships built expressly for fleet air defense. The Talos missile system still needed a heavy cruiser sized platform because of the large volume of the missile magazines, and the Tartar system could be installed in new destroyer type ships. But the Terrier system needed a ship larger than a destroyer, but not quite as big as a light cruiser. A WW II antiaircraft cruiser displaced about 8,000 tons, and a conventional destroyer displaced around 4,000 tons, whereas the Bureau of Ships calculated that a Terrier missile ship would need a displacement of about 6,000 tons. [Blades, Todd, CDR, USN, “The Cruiser Rediscovered,” US Naval Institute Proceedings, Vol. 107/9/943, Sep. 1981, pp 124-125]

The US Navy thus envisioned a new ship type which, because it was significantly smaller than a cruiser, but much bigger than a destroyer, had to be given a new type name. They decided to call the new type ‘guided missile frigates.’ Every ship type in the US Navy has to have an abbreviated type lettering, so in this case the first two letters came from the new larger Destroyer Leader type, or “DL.” Then a ‘G’ was appended to indicate that the new type’s main armament would be guided missiles. Thus the new Terrier missile ships would be classified as DLGs.

Even though the new DLG’s main armament would be Terrier guided missiles, the new type would still carry a few 5-inch guns, and a mix of anti-submarine weapons as well as a state of the art sonar. As USN ships have always been, they would be ‘multi warfare’ ships but, in this case, with a specialty in shooting down airplanes. The Bureau of Ships ordered the first ten guided missile frigates, called the Coontz class, in November 1955. [Moore, p 167] When it came to managing the details of anti-air warfare the new guided missile frigates relied on the same manual radar plotting and paper maneuvering board techniques as their World War II ancestors. Still, the new ship type was in much demand, and as each new guided missile frigate class appeared on the BuShips drawing boards it was bigger in displacement, had longer range, and carried more armament than its predecessors. There were many, including this writer, who wondered why they just didn’t call these new DLG classes ‘cruisers.’ More about this later.

Automated Air-Battle Management Aids

An Elusive Goal

The other half of the solution to the post WW II fleet air defense dilemma was the development of automated air battle management aids to most effectively use the new surface missile systems, the ship’s guns, and the new jet propelled interceptors. In his book Memories of CDSC the story of the Royal Australian Navy’s Combat Data Systems Center (published by the RAN in 2009), David Wellings Booth describes the manual, human intensive, anti-surface, anti-submarine, and anti-air battle management process used by the RAN as late as 1970. The following process is representative of that used by almost every navy in their combatant ships prior to the advent of naval tactical data automation.

Plotting tracks in those pre-NCDS days involved a basic radar screen, two or three electro-mechanical plotting tables and rather terrible Bakelite sound-powered headsets. ‘The sound-powered communications units were very evil to use.’ These headsets were not used for surface/anti-submarine warfare (ASW) plotting although it was a very noisy environment. The surface and ASW pictures were compiled on plotting tables. The surface picture was plotted on tracing paper, which was on big rolls. The enemy was always marked in red and the friendly units in blue. Contacts were plotted every minute including visual bearing advice from the bridge. One piece of tracing paper would cover a 30-minute period of operations before the roll was advanced and another series of contacts representing the next 30 minutes was pencilled on. Left any longer, the tracing paper would become very cluttered.

The plotting tables had a horizontal surface through which was projected a graticle compass rose with range rings engraved on it. The graticle was interchangeable to allow for the different ranges over which plotting could be done. The ship’s gyrocompass and log drove the whole projection. A sheet of tracing paper was laid over the screen onto which was drawn the contacts observed by the operators.

Air plots were compiled on vertical magnetic boards, using metal symbols with magnetic strips on the back. It was quite difficult to keep an accurate picture due to the person reporting the air contacts being at a radar display, calling the range and bearing to the plotter. Even in the 1970s, aircraft were already traveling fairly quickly.

The air plot was located near the fire control system. With all plotting conducted manually, the surface and ASW plot records had to be retained for analysis after major exercises such as the ‘Rim of Pacific’ (RIMPAC) naval exercises. Operations during early RIMPACs were plotted manually. With NCDS [The Australians name for their suites of USN Naval Tactical Data System equipment was Naval Combat Data System], at least you could see the whole picture...During time on watch, a sailor would spend an hour on the radar display, an hour on the plotting table and then have a brew or relieve the air plot operator.

The plotting process was: find the track, mark it on the [radar] scope, call out the bearing and range of the track.

Experienced operators could call out new contacts plus their bearings and ranges at a rate of twelve a minute. These times included the more difficult task of plotting the points on the tracing paper and writing data upside down.

When tracking low-flying aircraft traveling at 500 or more knots, there was heavy reliance on information from the radars of other ships.

Even by the early 1950s it was very clear that the amount of information available from the shipboard radars was not the problem; rather, the problem was the ability to assimilate the radar data and use it in a timely manner in a fast changing environment. From the above description, it can also be seen that, at best, the air, surface, and anti-submarine pictures could be developed as separate pictures by manual plotting teams, whereas it was critical to see all three pictures combined into one integrated situation plot.

Plotting the range and bearings of surface, subsurface, and air contacts (plus altitude, if available on an air contact) was just the beginning. It was next necessary to determine course and speed of each contact by plotting a few position points from successive sweeps of the radar, or sonar for submarine contacts, to ascertain course, and measure the time interval between successive points to calculate speed. We must remember also that ‘own-ship’ was also moving so that these calculations became an exercise in vector algebra. One of the first things a midshipman or an ensign learned in those days was how to use a maneuvering board to make these vector calculations. The maneuvering board, in its simplest form, is a sheet of paper with a compass rose and range rings inscribed on it. The user could learn to rapidly lay out successive target range and bearing points on the board, lay out own-ship’s course and speed, and quickly calculate target course and speed. This writer remembers many hours spent as a Combat Information Center watch officer using a maneuvering board to calculate a new own-ship’s course and speed when own-ship was required to move to a new ‘station’ from the formation guide ship. One could get to the point of doing it with no conscious thought.
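For readers who have never worked a maneuvering board, the vector arithmetic described above can be sketched in a few lines of Python. This is only an illustration of the relative-motion calculation, not a description of any Navy procedure or later NTDS program; the function names, the flat-plane geometry, and the units (nautical miles, knots, minutes, true bearings) are assumptions made for the example.

```python
import math

def polar_to_xy(bearing_deg, distance):
    """Convert a true bearing (degrees clockwise from north) and a distance
    into east (x) and north (y) components."""
    theta = math.radians(bearing_deg)
    return distance * math.sin(theta), distance * math.cos(theta)

def target_course_speed(own_course_deg, own_speed_kn, fix1, fix2, minutes_between):
    """Estimate a target's true course and speed from two (bearing, range_nm)
    fixes taken from own ship, which is assumed to hold a steady course and speed.
    """
    x1, y1 = polar_to_xy(*fix1)
    x2, y2 = polar_to_xy(*fix2)
    hours = minutes_between / 60.0

    # Relative velocity: how the target moved as seen from own ship.
    rel_vx = (x2 - x1) / hours
    rel_vy = (y2 - y1) / hours

    # Own ship's velocity vector (speed treated as a distance per hour).
    own_vx, own_vy = polar_to_xy(own_course_deg, own_speed_kn)

    # True target velocity = relative velocity + own-ship velocity.
    vx = rel_vx + own_vx
    vy = rel_vy + own_vy

    speed = math.hypot(vx, vy)
    course = math.degrees(math.atan2(vx, vy)) % 360.0
    return course, speed

# Hypothetical example: own ship steering 000 at 15 knots; the contact holds
# bearing 090 at 10 miles for six minutes, so it must be matching own ship.
course, speed = target_course_speed(0.0, 15.0, (90.0, 10.0), (90.0, 10.0), 6.0)
```

A contact whose bearing and range do not change has zero relative motion, so its true course and speed equal own ship's, which is the kind of sanity check a plotter would apply by eye.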

To make the plots minimally useful to weapons direction officers or air intercept controllers it was then necessary to annotate each plotted target track with calculated course and speed, identity (friendly, hostile, or unknown), type (air, surface, or subsurface), as well as an identifying track number. If the track was being reported by some other ship, the identity of that ship also had to be shown. Further, to avoid wasting weapons, it was critical to show if a given hostile target was already being engaged by another ship or by an interceptor under the control of another ship. Then, if a weapons direction officer could be presented with this information in one picture, it was up to him to determine which target appeared most threatening and determine what weapon, or interceptor, should be assigned to engage it.

If presented with only one, or maybe even a few, targets the above tasks could be reasonably well handled by a well trained plotting and weapons direction team. But the US Navy, as well as other allied navies, was concerned about possible massed Soviet air attacks, including long range standoff weapons. Reminiscent of WW II Pacific massed air attacks by the Japanese, possibly hundreds of air attackers might be involved, not to mention the need to keep track of the disposition of perhaps a hundred friendly units. Some form of battle management automation was critically needed not only to do the above, but also to rapidly perform and display the results of other supporting calculations such as vectoring commands to friendly air interceptors.

The need for naval tactical data automation was critical and pressing. There was no question of there being an urgent operational requirement, and in the US Navy just about any amount of funds was available to innovators who thought they could solve the problem. The question was how to do it. Prophetically, the three most significant early attempts involved the use of barely emerging digital technology, and one of these (not even a US Navy project) almost succeeded.

Digital Technology to the Rescue?

Way Ahead of its Time, The Canadian DATAR System - 1949

Like the comedian Rodney Dangerfield, the Royal Canadian Navy felt it ‘don’t get no respect’. This was in reference to their experiences in providing convoy escort ships during the WW II Battle of the Atlantic. In spite of providing almost half of the escort ships, Canadian officers were hardly ever in charge of the convoys; instead the British or Americans usually provided the convoy flagships and convoy commanders, and gave the orders. Following the end of hostilities the rankled Canadians vowed that in any future armed conflict they were going to put themselves into a position of being asked to manage the convoys.

How were they going to do this? They were going to equip their escort ships with an automated system to help keep track of the ships, freighters and escorts in a convoy by radar inputs, and keep track of submarines through sonar and other inputs. Further, the system would aid them in tracking down and attacking submarines, would help coordinate ASW operations among participating escorts and submarine hunter-killer groups, and even keep track of all aircraft in the convoy area. Such an automated system, they felt, would give the Canadians preeminence in convoy management. They decided to call it the Digital Automated Tracking and Resolving System (DATAR). [Vardalas, John N., Moving up the Learning Curve; The Digital Electronics Revolution in Canada, 1945-70, Thesis submitted to the School of Graduate Studies and Research in partial fulfillment of the requirements for the Ph.D. degree in History, University of Ottawa, 1996, p 66.]

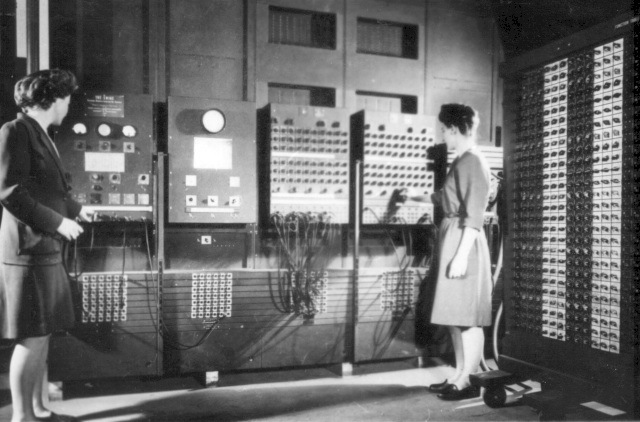

Even though, in 1948, there was not yet a commercially available digital computer to form the heart of such a system, the RCN began conceptual work on a system that could “capture extract, display, communicate, and share accurate tactical information in a timely manner.” [Vardalas, p 67]. The Canadians kept their eye on the progress of the US Army’s ballistics computer, the Electronic Numerical Integrator and Computer (ENIAC), and on its successor, the Electronic Discrete Variable Automatic Computer (EDVAC), which can probably be considered the prototype idea for all following general purpose, stored program digital computers.

As early as 1945, professors Frederick C. Williams and Tom Kilburn at Manchester University, England, were also keeping their eyes on the concept and progress of ENIAC and EDVAC, and by June 1948 were running a small general purpose, stored program digital computer based primarily on the EDVAC concept but using charged spots on electrostatic cathode ray storage tubes as the working random access memory instead of the mercury delay lines used in EDVAC. By April 1949, Williams and Kilburn were operating their full scale Manchester MARK I computer having not only electrostatic working memory, but also a rotating magnetic drum backup memory.

Some in the British Government began to take note of the MARK I’s fairly substantial computing power and gave funding support to the company Ferranti Ltd. to build a commercial MARK I version of the Manchester University prototype. Ferranti delivered their first MARK I in February 1951, and it is believed by some computer historians to be the first commercially available general purpose stored program digital computer. [Metropolis, N., Howlett, J., Rota, Gian-Carlo, A History of Computing in the Twentieth Century, Academic Press, Inc., New York, NY, 1980, ISBN 0-12-491650-3, p 433]

In 1950 the Canadian Navy contracted with Ferranti-Canada to provide three MARK I computers for the DATAR project with rotating magnetic memory drums as main working memory, and hardened to operate in a shipboard environment. Each computer used 3,800 vacuum tubes. Three test systems were built, each of which contained a computer, sonar and radar displays with the capability of inputting targets shown on the displays into the DATAR computer with a manual track ball, and an ultra high frequency (UHF) radio digital data link. Each system could process up to 64 targets, and could operate over an 80 by 80-mile tactical grid with a resolution of 40 yards. Two of the systems were installed in the RCN Lake Ontario minesweepers, Digby and Granby, and the third was installed in a shore based test site in their Ottawa laboratory. [Friedman, Norman, The Naval Institute Guide to World Naval Weapons Systems, 1991/92, Naval Institute Press, Annapolis, MD. 1991, ISBN 0-87021-288-5, p. 49]
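To give a feel for what an 80 by 80-mile grid at 40-yard resolution implies, the arithmetic can be sketched as follows. This is a back-of-envelope illustration only: the source does not say whether the miles are nautical or statute, nor how DATAR actually stored positions, so the unit conversions and the idea of counting bits per coordinate are assumptions made here.

```python
import math

# Assumed conversions; the source does not specify which "mile" is meant.
YARDS_PER_NAUTICAL_MILE = 1852 / 0.9144   # about 2,025.4 yards
YARDS_PER_STATUTE_MILE = 1760

def grid_cells_per_axis(span_miles, resolution_yards, yards_per_mile):
    """Number of resolution steps needed to span one axis of the grid."""
    return math.ceil(span_miles * yards_per_mile / resolution_yards)

def bits_needed(cells):
    """Smallest number of binary digits that can label `cells` distinct steps."""
    return (cells - 1).bit_length()

for label, yards_per_mile in (("nautical", YARDS_PER_NAUTICAL_MILE),
                              ("statute", YARDS_PER_STATUTE_MILE)):
    cells = grid_cells_per_axis(80, 40, yards_per_mile)
    print(f"{label} miles: {cells} steps per axis, "
          f"{bits_needed(cells)} bits per coordinate")
```

Under either assumption each coordinate needs a few thousand distinct steps, and with 64 tracks the system had to hold and exchange that many position pairs, which suggests the scale of the task for a vacuum tube machine.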

In 1950 the RCN proved out the UHF digital data link in shore-based tests, and began system tests with the two minesweepers in August 1953. There were bugs and challenges which had to be overcome, but the Canadians got the system working to their satisfaction. There was every reason to believe the RCN would never again have to play second fiddle to the British and Americans in convoy management. The seagoing systems practically filled the after half of the small minesweepers, and due to the large number of vacuum tubes in the electronics, in particular the MARK I computer, overheating was found to be a major problem. According to one account a fire destroyed the system in one of the test ships, and the RCN could not get the funds to rebuild the system and continue development. [Swenson, CAPT Erick N., Stoutenburgh, CAPT Joseph S., and Mahinske, CAPT Edmund B., Draft of NTDS - A Page in Naval History, dated 29 Sept. 1987]

DATAR historian John N. Vardalas notes that he could find no record of the destruction of one of the shipboard systems by fire, but rather that the Canadian Government just could not afford to continue DATAR funding after 1956. In any event, to the Royal Canadian Navy, who had made themselves the world leader in automated naval combat direction systems, it was a great loss and a great naval tragedy. There is no reason to believe that, with more routine testing and development, DATAR would not have been fully successful. Fortunately for the US Navy, the leading engineer on DATAR, Mr. Stanley F. Knights, provided valuable consulting support to the later USN Naval Tactical Data System Project beginning in 1956. [Swenson p. 5]

For a more complete discussion of DATAR history the reader may click on the heading of this section to read John N. Vardalas’ complete article on DATAR.

It was 1931 and the bottom of the Great Depression. Irvin L. McNally had just graduated from the University of Minnesota with a brand new bachelor’s degree in electrical engineering, but neither he nor any of his classmates were able to land a job in their chosen field. Fortunately for McNally he had six weeks of guaranteed work before him in the US Army Signal Corps. This was his obligated service payback as an Army Reserve Officers’ Training Corps student. McNally researched the possibilities of gaining civilian employment in the Signal Corps, and found there were none. His boss, Captain H. C. Roberts, had recently worked with his counterparts in US Navy communications and was impressed with the advances the navy was making in communications. Roberts suggested that McNally’s best career route might be to get into navy communications as an enlisted man, and weather the depression while learning new communications technologies.

In 1931, even enlisting in the navy was not easy. Only a few navy recruiting quotas were allocated to the Ninth Naval District, which included Minnesota, but McNally was able to secure one. In February 1932, following his graduation from ‘boot camp’ at Great Lakes, Illinois, he received orders to attend the Navy Radio School at San Diego, California. McNally had reason to celebrate that his career plans were beginning to shape up well, but his new C.O. at the radio school had a curve ball ready for him. With his degree in electrical engineering, as well as being a licensed ham radio operator, the C.O. thought McNally was a natural to be an instructor rather than a student. McNally pleaded that he had come into the navy to learn new technologies rather than teach what he already knew. He wanted to complete the school and go to sea in a working communications billet. The C.O. was mystified that his new student would rather go to sea than have a nice cushy four year tour of teaching; but he relented.

Upon graduation, McNally was granted his wish in spades by receipt of orders to the fleet commander’s communications staff aboard the Battleship Pennsylvania, having the largest and most diverse communications suite of any ship in the Pacific Fleet. He served aboard Pennsylvania for four years, by the end of which he had gained both a wealth of practical experience and new theoretical knowledge of radio and electronics; furthermore by 1936 he had risen to Radioman First Class. His term of enlistment was coming to a close, but he could see another route upward through the navy. He had worked with men called warrant officers, and he was very impressed by these commissioned officer technical specialists. He wanted to be one.

The route to warrant officer was not easy. He would have to take a rigorous written exam in his area of specialty to compete for one of the few quotas for Radio Electrician Warrant Officer. But even before he could take the exam he had to complete another prerequisite: graduation from Radio Material School given by the Naval Research Laboratory (NRL) at Washington, DC. This required the endorsement of the Commanding Officer, Pennsylvania, and temporary additional duty orders to attend the school. McNally’s bosses supported his request, and by 1936 he had orders in hand to proceed to NRL. Again, at the laboratory, the school administrators’ first impulse after reviewing his qualifications was to make him an instructor. This time they compromised: he would teach courses in areas he already knew, and would take courses where he felt he needed more education.

In addition to the Radio Material School syllabus, McNally was able to learn the basics of American naval radar from its inventor, Dr. Robert M. Page, and he took further courses on sonar and the Adcock radio direction finder. He was back aboard Pennsylvania by late 1936, and took the week-long Radio Electrician Warrant exam in 1937. The technical questions and problems were college level, and he was sure he never would have survived the exam without his degree in electrical engineering and the tour at Radio Material School. He was glad he had been captain of his AROTC drill team at U of Minnesota because the exam even had questions on close order drill, as well as recent news happenings, history, geography, and internal combustion engine theory. McNally not only passed the exam, but also became a Warrant Radio Electrician in December 1937.

In January 1938, McNally received orders to detach from USS Pennsylvania and proceed to Cavite, Philippines, to join the Sixteenth Naval District staff. His principal duty was supervision of the installation of radio listening stations at American consulate offices throughout China. Their purpose was to listen in on Japanese military radio traffic. He also installed a radio listening station at the US Army’s Corregidor fortress, and made a number of army friends in the process. He later found that many of these new friends died at the hands of the Japanese in prison camps.

In June 1940 McNally was ordered back to the Naval Research Laboratory to a course of study at the Warrant Officer’s Engineering School. The principal subject of the course was the new technology of radar. He was to learn radar in classes taught by the inventors of US naval radar: Dr. Page and his principal scientists Dr. A. Hoyt Taylor, Leo C. Young, Robert C. Guthrie, Arthur A. Varela, and Dr. Merril Distad. He learned not only radar theory, but also learned the practical engineering and construction details of the navy’s new Model XAF 200 MHz air search radar, the prototype of which had been designed by his instructors and was still at the lab as a research and instructing device.

At the end of one of his radar classes, Dr. Page invited McNally, who he thought was taking an unusual interest in the subject, to sit in on a radar briefing by some visiting British radar scientists. Page explained that he had been directed to reveal all his radar research findings to these members of the British ‘Tizard’ mission, and in return they were going to do the same. He indicated that McNally might learn something new. McNally did! He became one of the first Americans to learn of, and see, the new British ‘magnetron’ tube which was capable of producing centimeter wavelength radio waves at high power. This had been a goal that eluded NRL scientists for years. The British were amazed to learn that NRL, by inventing a simple duplexing switch, had been able to develop a radar that needed only one antenna, whereas their radars needed separate antennas for transmitting and receiving.

Following the NRL classes McNally and the other students attended lectures given by the US contractors who were building the first production naval radars. These were given on site at Bell Laboratories, Westinghouse, General Electric, and RCA. More lectures followed at the Massachusetts Institute of Technology, and by British naval radar scientists after the Tizard mission. In June 1941 McNally received orders to stay on at NRL as part of the teaching staff, and he was directed to help in preparing a syllabus for the first radar training course to be given to US naval officers. With four other warrant officers, McNally gave the course to 59 student officers. A young ensign, Edward C. Svendsen, was among the 59. Upon completion of the course, he was to return to the battleship Mississippi where he would become the ship’s first radar officer; and we have not heard the last of Svendsen.

When McNally had attended the NRL Radio Material School in 1936, Commander Daniel C. Beard had been Officer in Charge. Beard, in the summer of 1941, was at Pearl Harbor, Hawaii, assigned to the staff of the Commander-in-Chief, Pacific Fleet as fleet electronics officer. There was a severe shortage of radar technicians to service the growing number of search radars being installed in Pacific Fleet major combatants, and one of Beard’s functions was to establish a Pacific Fleet Radar Maintenance School. He developed a list of officers and warrant officers he felt would be qualified to establish and run the school, and McNally’s name kept coming to the top. Consequently, McNally soon received orders to proceed to Pearl Harbor to get the school started. On 1 November 1941 he and his wife Gracie started their drive to San Diego where Gracie would stay with her family until McNally had arranged quarters for them in Hawaii.

On 1 December 1941, McNally reported in to the chief of staff at CINCPAC headquarters, and then began seeking a temporary place to live until he had arranged for quarters for himself and Gracie. He had assumed that there would be plenty of room at the Pearl Harbor Naval Base bachelor officers’ quarters, and there was; however, he learned one of the realities of navy life. The BOQ was not authorized to house warrant officers, and there was no such thing as separate quarters for bachelor warrant officers. He was on his own. Out of the possible living arrangement alternatives he reasoned that there were plenty of unused bunks in the warrant officer countries of the major combatant ships tied up at the naval base. He found that the battleship Pennsylvania, his old ship with plenty of warrant officer friends on board, would temporarily be in Dry Dock 1, and Warrant Electrician Brown would be pleased to share his room.

Commander Beard was authorized to pick just about any suitable site he could find to build the radar school. He and McNally found a good location near the old coaling docks, oil instead of coal now being used in navy ships, and McNally began sketching out plans for radar installations and classrooms. On Saturday 6 December he found an apartment in Honolulu and wired Gracie to book passage for herself and their possessions to Hawaii. It would never happen.

At 0730 Sunday morning, McNally was up and around in Brown’s stateroom getting ready to visit friends in Honolulu. He was going to borrow one of Brown’s civilian hats and was trying it on when the battleship shuddered violently on its docking blocks. He peered out of the stateroom porthole to try to find out what was happening, and just as he did a torpedo bomber with red disk markings passed by, barely clearing the roofs of the shops along the dock. There was no question that Japanese airplanes were attacking Pearl Harbor. Any time the ship was at ‘general quarters’ the warrant officer’s mess was to be manned as a battle dressing station, and since he had no other ‘GQ’ assignment McNally proceeded to the mess to volunteer his services. On his way he saw that the harbor was full of flaming and exploding ships, with at least one ship, the battleship Oklahoma, rolling over.

The doctor in charge of the dressing station asked McNally to go below and get a supply of battle helmets, and just as he was picking up the helmets he heard a terrible explosion overhead. By the time he got back to the dressing station he found that a direct bomb hit had turned it into a shambles and most of the men manning it, including the doctor, were either dead or severely wounded. Following the doctor’s orders had literally saved his life; and he would live to perform a great service to the navy in return. He and other survivors converted mattresses into litters and dragged the most severely wounded to the sick bay. The explosions began to subside, and McNally went topside to survey the damage. The destroyers Cassin and Downes were in the dry dock forward of Pennsylvania, and were afire and exploding. Shipyard workers had to flood the dry dock to help quench the fires.

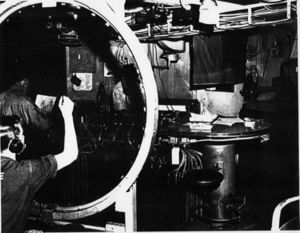

Pennsylvania was dispatched almost immediately to a mainland shipyard for repairs and McNally found new quarters with a marine warrant officer friend, but before he could get started again he was diverted on 13 December to the aircraft carrier Lexington where he was told to report immediately to the commanding officer, Captain Frederick C. Sherman. His orders to McNally were simple and direct. “Get my CXAM-1 air search radar working.” It did not take much troubleshooting to find the main problem with the radar. Unfortunately the trouble was not in the radar room, but rather in the antenna train motor which was mounted 100 feet up on top of the front of the ship’s smoke stack. There was no time to wait for daylight, so he and two enlisted volunteers climbed in the pitch black darkness in stifling stack gas to dismount the ponderous train motor and lower it to the flight deck.

McNally found that the motor’s gear case had a ruptured oil seal which had not only short circuited the train motor but in the process had produced enough intense heat to demagnetize the motor’s permanent magnet pole pieces. In normal conditions McNally would have told the CO that he would need to get new pole pieces, which were not included in the ship’s spare parts list because of the extreme improbability of ever needing such parts. He had to improvise, and improvise he did. He calculated the number of turns of magnet wire he would have to wind around the defunct magnets to turn them into electromagnets having the proper strength when fed from the motor’s direct current power supply. It worked in the shop and they went through the arduous task of remounting the motor atop the stack.

There was still more damage to repair when Lexington steamed out of Pearl Harbor with McNally still aboard. The amplidyne controller which controlled speed and direction of antenna rotation was damaged beyond repair, and he had to make a jury rig controller out of flashlight batteries and a potentiometer. It worked too, and his final task was to give the radar set and its power tubes a good cleaning, grooming, and tuning. He reported back to the skipper to tell him the radar was working. CAPT Sherman replied that he was authorized to keep McNally on board as long as he wanted him, and his only job was to keep that radar working.

There is a lot of evidence, such as the above, to show that in spite of senior US naval officers’ general reputation at that time for resisting technical innovation, there was no such resistance to radar because most could immediately see the immense value of radar, regardless of the fact that its powerful emanations told an enemy just where your ships were. McNally replied to the skipper that he had another job in addition to keeping the radar working. Sherman was in no mood for back talk from a warrant officer, and he testily asked, “And just what would that be, Mr. McNally?” McNally’s response was, “That would be to teach your men to do the same thing, Captain.”

Lexington returned to Pearl Harbor on 27 December, and by that time McNally had trained two radio electricians and two chief petty officers to the point that they were fully qualified to maintain the radar. Thus he had completed a little part of his mission to set up a radar maintenance school. That was good because the next day Commander Beard told him he had another radar emergency. This time it was 12 Catalina flying boat patrol planes that had just been delivered from the mainland, were at Kaneohe Naval Air Station, and were practically useless because every one of their British-built ASV airborne air search radar sets was inoperative.

This time, with Commander Beard’s influence, McNally was lodged in the station bachelor officers’ quarters, and was supplied with some enlisted technician assistants. By early afternoon of 28 December they had removed one of the radar sets from a Catalina and set it up on a bench in the electronics shop. McNally hesitated to apply power to the set until he knew how it worked. There was not a shred of documentation with the sets, so he spent the rest of the day tracing circuits and drawing electronics schematics. By the end of the day he had powered up the unit and had the electronics working, but only the weakest of signals were coming from the antenna, which had also been removed from the airplane. He began to suspect a mismatch between the set’s operating frequency and the size of the antenna.

With no documentation, or any other clue as to the radar’s operating frequency, he had to find a way to measure the frequency. This he did by fashioning an open-wire transmission line fed by the radar output. With a neon bulb he was able to find the peaks in the wire’s standing wave and thus determine the set’s wavelength. It was a complete mismatch with the size of the antenna. The sets on the 12 Catalinas had apparently never been tested after installation. Now that he knew the correct operating frequency (176 MHz), it was a pleasant exercise to design a new antenna. The naval air station sheet metal shop built the antennas and installed them. Then he found that none of the Catalinas’ radio technicians had the foggiest idea how to either operate or maintain their new radar sets.
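The arithmetic behind that neon-bulb measurement can be sketched as follows. This is only an illustration, not a record of McNally's actual figures: it assumes that adjacent voltage peaks on the line sit half a wavelength apart and that the wave travels along an open-wire line at close to the speed of light. The function names and the peak positions in the example are hypothetical.

```python
# Sketch: estimating a transmitter's frequency from standing-wave peaks
# found along an open-wire line. All names and sample values are
# illustrative assumptions, not figures from McNally's measurement.

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def frequency_from_peaks(peak_positions_m, velocity_factor=1.0):
    """Return the frequency in hertz implied by successive peak positions.

    Adjacent standing-wave maxima are half a wavelength apart, so the
    wavelength is twice the average spacing between them.
    """
    if len(peak_positions_m) < 2:
        raise ValueError("need at least two peak positions")
    spacings = [b - a for a, b in zip(peak_positions_m, peak_positions_m[1:])]
    wavelength_m = 2.0 * sum(spacings) / len(spacings)
    return velocity_factor * SPEED_OF_LIGHT_M_PER_S / wavelength_m


def peak_spacing_for(frequency_hz, velocity_factor=1.0):
    """Return the expected distance in meters between adjacent peaks."""
    return velocity_factor * SPEED_OF_LIGHT_M_PER_S / frequency_hz / 2.0


if __name__ == "__main__":
    # At 176 MHz the peaks would be a little over 85 cm apart.
    print(round(peak_spacing_for(176e6), 3), "m between peaks")
    # Hypothetical peak positions measured along the wire, in meters.
    print(round(frequency_from_peaks([0.10, 0.952, 1.803]) / 1e6, 1), "MHz")
```

A wavelength of about 1.7 meters is long enough that the peaks can be located with a ruler, which is what makes so simple a measurement practical at this frequency.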

CDR Beard told McNally to stay with the squadron and start his training school there. This he did in a shore-based classroom as well as taking a day’s patrol flight with each crew to give them practical instruction in operation, trouble shooting and maintenance. In the process he built up 150 flying hours, with no flight pay of course. The work on the Catalinas lasted until the end of February 1942, when he was finally free to get back to the radar maintenance training school construction. This was not going to be as easy as he thought. Even though the construction was going to be technically relatively straightforward, and he knew exactly what he wanted, he found his problem was bureaucracy, red tape, and rank discrimination. The local public works department could not conceive that a warrant officer could possibly have enough authority to be in charge of constructing new school facilities and they gave him less than faint hearted support.

One complaint to CDR Beard was enough to have the public works department fired from the job with a stinging censure, and Beard rounded up a Navy Construction Battalion (the Navy’s fighting SeaBees) with some time on its hands. The cooperative SeaBees set to work with alacrity, and on 1 May 1942 the school was up and running. As of June 1943 the school had been in operation for a year with instructors and staff that McNally had trained himself. Also, to bring his rank a little more in line with the responsibility of running the school, McNally had received a full officer’s commission and had been spot promoted to Lieutenant junior grade, and a few weeks later to full Lieutenant. Things were going very well, but this was not to last; and you might say that what was about to happen next was due to his own ‘misconduct’, or better still to his exemplary good conduct.

McNally made it a practice to visit ships returning to Pearl Harbor after deployments. He would meet with the radar officers and technicians to find how their radars were doing, discuss problems, solicit new features they would like to have, and give them advice. He visited every submarine he could to ascertain how their new Model SJ surface search and targeting radar sets were doing. The submariners appreciated his help, and introduced him to Vice Admiral Lockwood, Commander of Pacific Fleet Submarines, who not only expressed his appreciation but also visited McNally’s school from time to time.

The submariners told McNally they loved their SJ radars because they had been able to make what would otherwise have been impossible kills with it. They did have one reservation: they had to bring their boats close to the surface and poke the rotating antenna above the water. The antenna could easily alert an enemy to their presence and exact location. They asked him, was it possible to build an antenna for the SJ that was small enough that it could be mounted on the night periscope? They showed him the periscope, and McNally saw that there might be just room enough to mount a small antenna. Building a wave guide with a joint that would allow the periscope to be pointed in any direction would be a real engineering challenge however.

McNally worked up a small slotted wave guide antenna and tried it out strapped to a periscope; but without the needed wave guide joint. They took the temporary rig to sea and found that the SJ radar, with the small antenna, could detect moderately sized ships at ten mile range with range accuracy of 15 yards. The primary drawback was the large 20-degree beam width brought on by the very small antenna aperture. They determined, however, that if they rocked the antenna back and forth, they could discriminate in bearing down to one degree accuracy. In a June 1943 visit to the radar school VADM Lockwood asked McNally about a radar antenna that could be attached to an optical periscope. His response “I think we have the answer admiral” astounded Lockwood.

McNally showed him the small antenna and told him of the results they had obtained so far. VADM Lockwood’s next action was to pick up a phone and make an urgent call to Commander-in-Chief, Pacific Fleet. He then told McNally to start packing his things and be at the Pan American Clipper dock the next day for a trip to San Francisco. From San Francisco he journeyed by train to Washington, DC, where he reported in to the Radar Design Branch of the Bureau of Ships. His assignment was to complete the design of his periscope antenna, in particular the wave guide joint, and get the antenna into production. With the help of Bell Laboratory scientists the antenna design was perfected and given the designation ‘Submarine Periscope Radar Equipment Model ST.’ Even with the antenna in production, there was no way McNally was going back to his school at Pearl Harbor. Within a few weeks after his reporting in to BuShips he was spot promoted to Lieutenant Commander, and not much later he took charge of the Radar Design Branch. With his promotion, the Bureau of Naval Personnel also changed his designator to engineering duty officer (EDO).

As of 1949 McNally had been in charge of the branch for seven years and had a number of radar developments under his belt. The billet of Radar Program Manager at the Navy Electronics Laboratory (NEL), San Diego, was soon to be vacant, and was considered to be the next step upward for McNally. In mid summer he reported in to the laboratory, which was then under the command of Captain Rawson Bennett, an EDO and formerly in charge of the BuShips Sonar Design Branch during WW II.

At NEL, McNally had a chance to work through some of the ideas he had while in charge of the Radar Design Branch. He felt that radar technology had been fairly well developed and even though there were many more avenues for further radar improvement and development, the highest payoff in radar research and development lay in devising ways to better use the massive amount of data available from radar. He could see that human radar plotting teams were often overwhelmed and could effectively use only a small fraction of the data presented to them. They obviously needed some sort of automated aids to make better use of existing radars.

McNally reasoned that the first step should be a device that could simultaneously record, store, and display the range and bearing of a number of contacts in the form of electronically generated symbols. Then it should build up a visible track of each contact as operators picked their new positions off the radar scope after each sweep. That wasn’t all. It should then calculate the course and speed of each target and show these on the display. A vector line emanating from the contact symbol, pointing in the direction of the course with length proportional to speed, seemed to be a good way to do it. Finally, he wanted to take this entire electronically generated picture of target symbols, their past tracks, and their speed/direction vectors and overlay it back over the raw video picture so that operators could see how well the artificial symbols matched up with their raw video ‘blips’ as the targets proceeded across the scope.
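The course and speed calculation McNally had in mind can be illustrated with a short sketch. It is a modern restatement under simplifying assumptions, not a description of how the laboratory device worked: it takes two range and bearing plots of the same contact, treats the radar as stationary (so the result is motion relative to own ship), and uses a flat plotting surface. The function names and the display scale factor are hypothetical.

```python
# Sketch: course and speed of a contact from two successive radar plots.
# Assumes a stationary radar at the origin and a flat plotting surface;
# all names and the vector scale factor are illustrative.
import math


def to_east_north(range_nmi, bearing_deg):
    """Convert range (nautical miles) and true bearing (degrees) to x, y."""
    bearing = math.radians(bearing_deg)
    return range_nmi * math.sin(bearing), range_nmi * math.cos(bearing)


def course_and_speed(first_plot, second_plot, seconds_between):
    """Return (course in degrees true, speed in knots) between two plots.

    Each plot is a (range_nmi, bearing_deg) pair picked off the scope.
    """
    x1, y1 = to_east_north(*first_plot)
    x2, y2 = to_east_north(*second_plot)
    dx, dy = x2 - x1, y2 - y1
    course_deg = math.degrees(math.atan2(dx, dy)) % 360.0
    speed_knots = math.hypot(dx, dy) / (seconds_between / 3600.0)
    return course_deg, speed_knots


def vector_tip(plot, course_deg, speed_knots, nmi_per_knot=0.01):
    """Return the x, y end point of a display vector drawn from the contact,
    pointing along the course with length proportional to speed."""
    x, y = to_east_north(*plot)
    length = speed_knots * nmi_per_knot
    course = math.radians(course_deg)
    return x + length * math.sin(course), y + length * math.cos(course)


if __name__ == "__main__":
    # A contact first plotted 10 miles due north, then 6 minutes later
    # plotted one mile farther east.
    later = (math.hypot(1.0, 10.0), math.degrees(math.atan2(1.0, 10.0)))
    course, speed = course_and_speed((10.0, 0.0), later, 360.0)
    print(round(course, 1), "degrees,", round(speed, 1), "knots")
```

Repeating the calculation after every sweep, for every contact, is the workload that overwhelmed human plotting teams.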

Laboratory engineers Everett E. McCown and R. Glen Nye were already ahead of LCDR McNally in some aspects of his idea. They had been designing radar and sonar simulators as training aids. These devices could generate a clear tactical picture of as many as eight simulated target symbols on a simulated radar scope. McNally asked them if they could lay that same picture on the face of a live radar scope in company with live radar blips. They could, and they found a way to pick off and measure the coordinates of the live video target blips using a joystick of McNally’s design using electrical potentiometers. They could also show the past track and the speed/course vector.

Three problems shadowed them, however: the device could accommodate no more than eight or ten targets, it was bulky, and it was not very reliable because of the number of electromechanical potentiometers, capacitor storage banks for target coordinate voltages, and motor driven scanners involved. Even though they had demonstrated McNally’s idea, they were not satisfied that it could be an operationally useful device, bulk and reliability being the main concerns. All three investigators had some familiarity with the emerging field of digital computers, and they reasoned that digital technology might be a way to cure the problems with the device. McCown and Nye had recently audited a course in digital technology given by Dr. Harry D. Huskey at the University of California, Los Angeles (UCLA), where he had started work on designing and building the Bureau of Standards Western Automatic Computer (SWAC).

With Dr. Huskey’s help, the three investigators designed a small special purpose digital computer having the capability to add, subtract, multiply, divide, and store target coordinates as binary numbers in digital registers. (No digital computer was available commercially in 1950. This was the era of do-it-yourself digital computers.) Ancillary to their digital computer they fabricated analog-to-digital converters to input the target coordinate voltages picked by McNally’s joystick from the radar scope into the computer’s input target coordinate storage registers, and they built digital-to-analog converters to transmit newly computed target symbol positions back to the synthetic tactical picture overlay. The computer worked, and they named it the Semi-Automatic Digital Analyzer and Computer (SADZAC), and the entire system they called the Coordinated Display Equipment (CDE).
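The round trip from joystick voltage to binary register and back to a display voltage can be sketched in a few lines. The register width and voltage range below are assumptions chosen for illustration; the source does not give the actual values used in the laboratory computer, and the function names are hypothetical.

```python
# Sketch: analog-to-digital and digital-to-analog conversion of a target
# coordinate voltage. The 10-bit register width and 10-volt full scale
# are assumed for illustration only; the source states neither.

def analog_to_digital(voltage, full_scale_volts=10.0, bits=10):
    """Return the integer code nearest to the given coordinate voltage."""
    top_code = (1 << bits) - 1
    clamped = min(max(voltage, 0.0), full_scale_volts)
    return round(clamped / full_scale_volts * top_code)


def digital_to_analog(code, full_scale_volts=10.0, bits=10):
    """Return the display voltage corresponding to a stored integer code."""
    top_code = (1 << bits) - 1
    return code / top_code * full_scale_volts


if __name__ == "__main__":
    joystick_volts = 3.3                       # hypothetical joystick output
    code = analog_to_digital(joystick_volts)
    print(format(code, "010b"))                # binary number held in a register
    print(round(digital_to_analog(code), 3))   # voltage sent back to the overlay
```

The voltage that comes back differs from the original by at most half of one step, which is the price of storing a coordinate as a binary number instead of as a charge on a capacitor.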

[McNally, Irvin L., Letter to D. L. Boslaugh, 30 March 1993]

[McNally, Irvin L., Interview with D. L. Boslaugh, 20 April 1993]

[McNally, Irvin L. Letter to D. L. Boslaugh, 17 October 1994]

Digital Disappointment, The Semi-Automatic Air Intercept Control System - 1951

In 1951 McNally felt the device was ready for a shipboard evaluation as a radar data processor, with the added capability of doing interceptor control vectoring calculations, and he briefed his sponsors in BuShips’ Radar Design Branch. Their concern was the device’s limited track capacity, coupled with the concern that a vacuum tube based digital computer powerful enough to handle a realistic tactical tracking load of perhaps a hundred or more targets would be too massive for shipboard use. BuShips elected to try the concept on a more limited application, air intercept control, where at a minimum the system has to keep track of only two aircraft: the target and a controlled interceptor.

The Bureau contracted with Teleregister Company to use the concepts of the Coordinated Display Equipment in an electronic digital automated radar data plotting and interceptor control vector computing device. The Navy Electronics Lab CDE team was tasked to provide technical direction to Teleregister in designing and producing the resultant Semi-Automatic Air Intercept Control System. Even though the concept was viable in theory, and expert laboratory engineers could make it work in the lab environment, it appears that commercial digital technology was not yet sufficiently mature to build a reliable tactical device, and Teleregister never finished the contract. It was a valuable learning experience and a useful step toward a viable solution.

[Graf, R. W., Case Study of the Development of the Naval Tactical Data System, National Academy of Sciences, Committee on the Utilization of Scientific and Engineering Manpower, Jan. 29 1984, p. III-1]

[Swenson, CAPT Erick N., Stoutenburgh, CAPT Joseph S., and Mahinske, CAPT Edmund B., “NTDS - A Page in Naval History,” Naval Engineer’s Journal, Vol. 100, No.3, May 1988, ISSN 0028-1425, p. 54]

We Can Do it With Analog Computers

Digital radar data processing was understandably shelved for a while in favor of more tries with analog computing systems. The Royal Navy was under just as much pressure as the US Navy to find a way to automate the handling, manipulation, and display of radar data, and elected from the start to try an analog computing approach. In 1951 the RN issued a contract to the electronics firm Elliott Brothers, Ltd. to develop and produce such a system for major combatant ships. They named it the Comprehensive Display System (CDS).